Kubernetes explained: the theme park analogy: auto scaling, taints and affinities

More on explaining Kubernetes and theme parks: scaling, taints and affinities.

In the previous post we covered quite a bit of ground explaining Kubernetes concepts with a theme park analogy: containers, pods, deployments, containerPorts, CPU and memory resources, probes, services, ingresses, labels, nodes and node pools.

In this post, we continue with the theme park analogy and talk about autoscaling, taints and affinities.

You can also read the next post about Kubernetes StatefulSets.

Success!

As could only be expected, KubePark is a big success. People flock to the gates, eager to enjoy the KubePark experience, but there are just too many of them: queues start to grow and grumpy visitors leave ugly reviews on popular websites.

Image attribution: http://www.thethemeparkguy.com/.

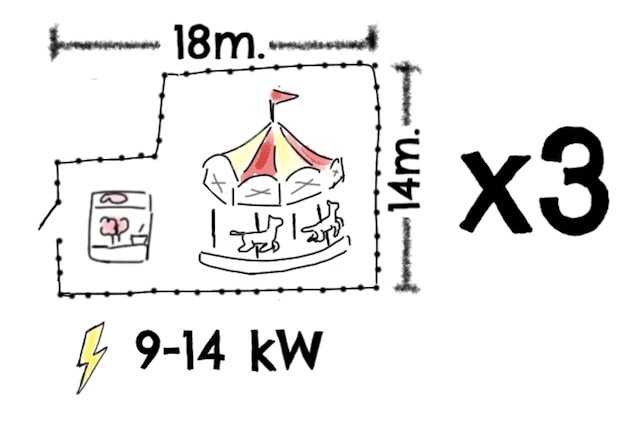

You keep the queues in check by first increasing the number of attractions in your fun ride plans, and then by also increasing the number of rented parcels when the parcels become full.

As if having to fiddle every day with the fun ride plans and parcel contracts were not bad enough, you very soon notice that the 1909 Carousel is extremely popular in the mornings but hardly used in the evenings, while the Roller Coaster is the opposite, and let’s not talk about those wild 4am Conga Lines! Also, the number of visitors on weekends is orders of magnitude bigger than on workdays.

You are just wasting a ton of money and time.

To solve the issue, you devise an additional set of instructions (one per attraction) for your control crew: if the average usage across all clones of an attraction is high, the control crew will install more of those attractions, and remove them again when the usage is low (k8s horizontal pod autoscaling). The usage can be measured in different ways, but you decide that power consumption (k8s CPU usage) is a good enough proxy for how busy an attraction is.
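Back in Kubernetes terms, the control crew's rule is close to the formula in the Horizontal Pod Autoscaler documentation: desired replicas = ceil(current replicas × current metric / target metric). The sketch below is a toy version of that rule, not the real controller: the function name and default limits are made up for illustration, and it ignores the tolerance band and stabilization windows that the real autoscaler applies.

```python
import math

def desired_replicas(current_replicas, current_cpu_percent, target_cpu_percent,
                     min_replicas=1, max_replicas=10):
    """Toy HPA rule: scale proportionally to usage, then clamp to the allowed range."""
    raw = math.ceil(current_replicas * current_cpu_percent / target_cpu_percent)
    return max(min_replicas, min(max_replicas, raw))

# Morning rush: 3 carousels averaging 90% of their requested power, target is 60%.
print(desired_replicas(3, 90, 60))  # 5

# Quiet evening: 5 carousels averaging 10%, target is 60%.
print(desired_replicas(5, 10, 60))  # 1
```

The clamp plays the role of the minimum and maximum number of clones you allow per attraction, so a sudden spike cannot fill the whole park with carousels.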

On top of that, a new clause is added to the rental agreement with your landlord that allows your control crew to rent additional parcels without your consent (up to a maximum). This way, the park can grow during the weekends and shrink on workdays (k8s cluster autoscaler).

From now on, KubePark will always run with the minimum required resources, and with little additional effort on your side.

More attractions!

Now that KubePark is running smoothly, it is time to offer a wider range of attractions.

A market study suggests that an ice rink and a ski slope will be the next big things.

As they require snow and cold weather, you sign a new rental contract (k8s node pool) to provide you with parcels in the arctic, of size 500x200 with 250kW power, and you tag them as “plains” and “arctic weather”.

On the fun ride template, you add a new optional section for must-have and nice-to-have parcel tags, and both the ice rink and ski slope fun ride plans include “must-have arctic weather”. The control crew will make sure that those attractions are always installed on parcels with arctic weather (k8s node affinity).
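As a rough idea of what that section of the fun ride plan looks like in Kubernetes, here is a pod manifest built as a Python dictionary and printed as JSON (which `kubectl` accepts as well as YAML). The field names under `nodeAffinity` are the real Kubernetes ones; the label keys `weather` and `terrain`, the pod name and the image are hypothetical stand-ins for the parcel tags in the analogy.

```python
import json

ice_rink_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "ice-rink"},
    "spec": {
        "affinity": {
            "nodeAffinity": {
                # Must-have: only nodes labelled weather=arctic are eligible.
                "requiredDuringSchedulingIgnoredDuringExecution": {
                    "nodeSelectorTerms": [
                        {"matchExpressions": [
                            {"key": "weather", "operator": "In", "values": ["arctic"]}
                        ]}
                    ]
                },
                # Nice-to-have: prefer plains, but do not insist on them.
                "preferredDuringSchedulingIgnoredDuringExecution": [
                    {"weight": 1,
                     "preference": {"matchExpressions": [
                         {"key": "terrain", "operator": "In", "values": ["plains"]}
                     ]}}
                ],
            }
        },
        # Hypothetical image name, for illustration only.
        "containers": [{"name": "ice-rink", "image": "example.com/kubepark/ice-rink:1.0"}],
    },
}

print(json.dumps(ice_rink_pod, indent=2))
```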

Note that attractions with no parcel requirements could still be installed in the arctic parcels. Most of the time you are ok with this: the 1909 carousel looks even more beautiful with the snow. But in some special cases, like those extremely expensive zero-gravity parcels, you want to keep all attractions out, except for the ones designed explicitly for them (k8s taints and tolerations).
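The difference from node affinity is that here the parcel does the rejecting. A minimal sketch of that check, assuming a simplified matching rule (same key and effect, and either the `Exists` operator or equal values) and looking only at `NoSchedule` taints; the `gravity` key and the space walk attraction are hypothetical, and the real scheduler handles more cases than this:

```python
def tolerates(toleration, taint):
    """Simplified rule: keys and effects must agree, then Exists or equal values."""
    if toleration.get("key") != taint["key"]:
        return False
    # A toleration without an effect tolerates every effect for that key.
    if toleration.get("effect") not in (None, taint["effect"]):
        return False
    if toleration.get("operator", "Equal") == "Exists":
        return True
    return toleration.get("value") == taint.get("value")

def can_schedule(pod_tolerations, node_taints):
    """A pod fits only if every NoSchedule taint on the node is tolerated."""
    return all(
        any(tolerates(t, taint) for t in pod_tolerations)
        for taint in node_taints
        if taint["effect"] == "NoSchedule"
    )

# The landlord taints the expensive parcel; plain attractions carry no tolerations.
zero_gravity_parcel = [{"key": "gravity", "value": "zero", "effect": "NoSchedule"}]
carousel = []
space_walk = [{"key": "gravity", "operator": "Equal", "value": "zero", "effect": "NoSchedule"}]

print(can_schedule(carousel, zero_gravity_parcel))    # False
print(can_schedule(space_walk, zero_gravity_parcel))  # True
```

Note that a toleration only lets an attraction in; it does not force it there. To make the space walk land on zero-gravity parcels and nowhere else, you would combine the toleration with a must-have parcel tag.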

Trouble ahead

With the snowy parcels in mind, you start to roll out new attractions: Igloo Overnights, The Penguin Race, Polar Bear Sightseeing, Naked-Man Ice Fishing Competition… but in the rush to open all these new attractions, you neglect a very important detail and calamity strikes, badly hurting KubePark’s reputation.

The following picture goes viral, but be warned, you might find it very disturbing:

Image attribution: Lick by Kukuxumusu.

By chance, a Polar Bear Sightseeing attraction was installed next to a Penguin Race. Not a nice spectacle.

To ensure that it doesn’t happen again, the Penguin Race is tagged as yummy snacks and a new option is added to the attraction’s template so that you can specify that the Polar Bear Sightseeing must not be in the same parcel as attractions with the tag yummy snacks.

Foreseeing possible future uses, you decide to also add the options to specify must-have-attraction-with-tag-X-in-the-same-parcel, nice-to-have-attraction-with-tag-X-in-the-same-parcel and nice-to-NOT-have-attraction-with-tag-X-in-the-same-parcel (k8s pod affinity and anti-affinity).

Your control crew will need to hire, at the very least, a Tetris World Champion.
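For the curious, the four options map onto Kubernetes roughly as in the sketch below, again written as Python dictionaries that serialize to a valid `affinity` block. The `podAffinity` and `podAntiAffinity` field names are the real ones; the labels `tag: yummy-snacks` and `tag: hot-chocolate` are hypothetical, and `kubernetes.io/hostname` as the topology key is one reasonable reading of "in the same parcel" (the same node).

```python
import json

def term(label_value):
    """Selects pods carrying the (hypothetical) label tag=<label_value> on the same node."""
    return {
        "labelSelector": {"matchLabels": {"tag": label_value}},
        "topologyKey": "kubernetes.io/hostname",
    }

polar_bear_affinity = {
    "podAntiAffinity": {
        # Must NOT share a parcel with anything tagged yummy snacks.
        "requiredDuringSchedulingIgnoredDuringExecution": [term("yummy-snacks")],
    },
    "podAffinity": {
        # Nice-to-have: a hot chocolate stand in the same parcel.
        "preferredDuringSchedulingIgnoredDuringExecution": [
            {"weight": 50, "podAffinityTerm": term("hot-chocolate")}
        ],
    },
}

print(json.dumps({"affinity": polar_bear_affinity}, indent=2))
```

The remaining two combinations follow the same pattern: a must-have neighbour is a `required...` entry under `podAffinity`, and a nice-to-NOT-have neighbour is a weighted `preferred...` entry under `podAntiAffinity`.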

Is that all?

You wish again!

You can read about Replication, Daemon and Stateful sets, Persistent Volumes, Jobs and Cron Jobs, Secrets and Config Maps in the next post.